Facebook Is Helping Intel To Design AI Deep Learning Xeon Processor

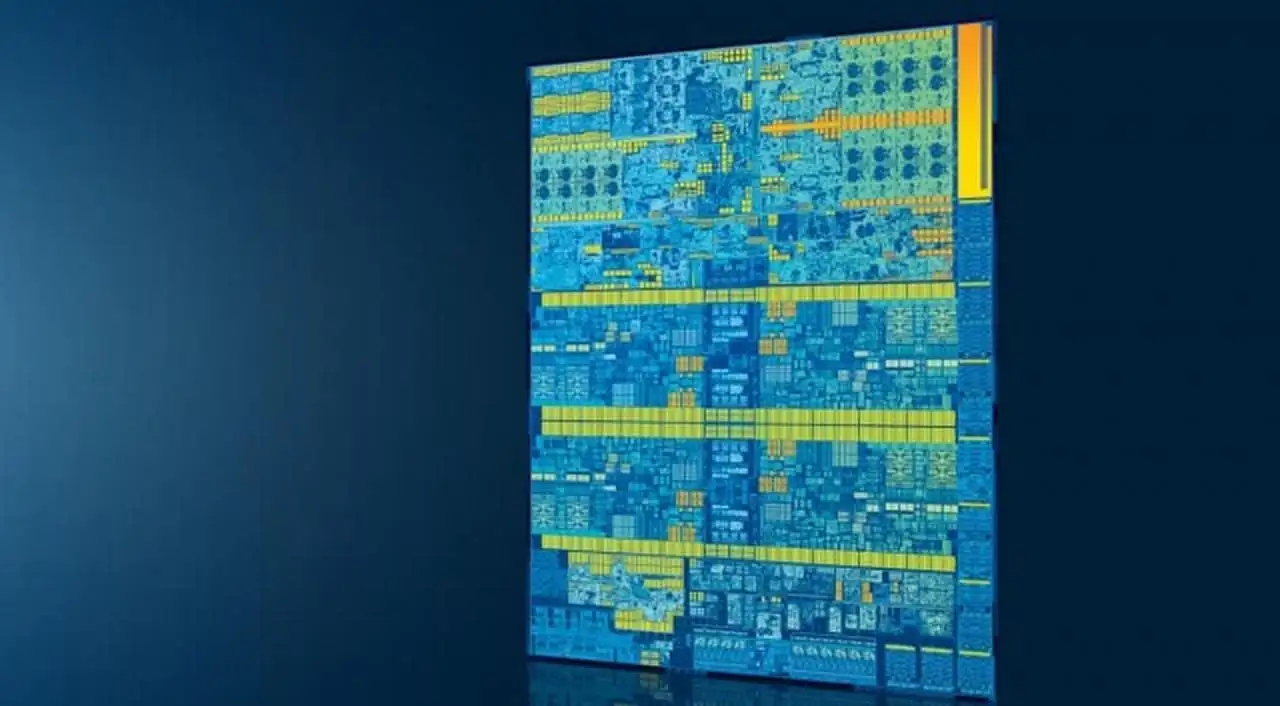

Artificial intelligence and deep learning are making their way into every part of the technology industry. At the Open Compute Project Global Summit 2019, Intel announced that it has collaborated with Facebook to design the upcoming 14nm Cooper Lake Xeon processor family.

Facebook is Helping Intel in Deep Learning

According to Jason Waxman, VP of Intel’s Data Center Group and GM of the Cloud Platforms Group, Facebook, which has been working on AI and ML for years, is now helping Intel incorporate the bfloat16 format into Cooper Lake’s design. If you don’t know, bfloat16 is a 16-bit floating-point representation used in deep learning training. It speeds up training, especially in tasks like machine translation, image classification, recommendation engines, and speech recognition.

Bfloat16 also offers the same dynamic range as 32-bit floating-point representations, because it keeps the same 8-bit exponent and shortens only the fraction. That lets the processor handle training workloads with half the bits per value. The company is also looking to enhance its two-socket design and will introduce new platforms with four- and eight-socket designs. According to speculation, a four-socket server built on the upcoming Xeon family could have up to 112 cores and 224 threads. The processor will be able to address 12TB of DC Persistent Memory Modules (DCPMM).
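To make the dynamic-range point concrete, here is a minimal Python sketch of the bfloat16 layout (1 sign bit, 8 exponent bits, 7 fraction bits). It assumes the simplest possible conversion, which keeps the top 16 bits of a 32-bit float and drops the rest. Real hardware typically rounds rather than truncates, and the function names here are made up for illustration; this is not Intel's or Facebook's implementation.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for x (hypothetical helper).

    Assumption: plain truncation. The value is first stored as an IEEE 754
    float32, then only the upper 16 bits (sign, 8-bit exponent, top 7
    fraction bits) are kept.
    """
    (bits32,) = struct.unpack(">I", struct.pack(">f", x))
    return bits32 >> 16


def bfloat16_bits_to_float(bits16: int) -> float:
    """Expand a bfloat16 bit pattern back to a float by zero-padding."""
    (value,) = struct.unpack(">f", struct.pack(">I", (bits16 & 0xFFFF) << 16))
    return value


def roundtrip(x: float) -> float:
    """Show what a value looks like after being stored as bfloat16."""
    return bfloat16_bits_to_float(float32_to_bfloat16_bits(x))


if __name__ == "__main__":
    # Precision drops to roughly 2-3 decimal digits ...
    print(roundtrip(3.14159265))
    # ... but very large float32 values still fit, because the exponent
    # field is the same width as in float32.
    print(roundtrip(3.0e38))
```

Because the exponent field is untouched, a value near the top of the 32-bit range survives the conversion with only a small relative error, while the fraction loses 16 of its 23 bits.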

“OCP is a vital organization that brings together a fast-growing community of innovators who are delivering greater choice, customization, and flexibility to IT hardware. As a founding member of this open source community, Intel is committed to delivering innovative products that help deploy infrastructure underlying the services that support the digital economy,” said Jason Waxman at the summit.

It’s important to note that even with 112 cores and 224 threads, the Cooper Lake processors would still trail the 7nm-based AMD EPYC lineup, which offers 128 cores and 256 threads on a dual-socket motherboard.

As AI, IoT, cloud, and network transformation take place, they are creating a massive amount of unorganized data. Both the Open Compute Project (OCP) and Intel are committed to designing hardware equipped to manage this unprecedented scale efficiently.

Apart from this, Intel will also contribute its RSD 2.3 Rack Management Module code to the OCP community. For more than a year, Intel has been working toward simple common standards for BIOS, BMC, and rack management software by taking an active part in the OCP System Firmware, OpenBMC, and OpenRMC projects.

The company will also release a family of OCP v3.0-compliant network interface controllers (NICs) in the third quarter of 2019. It will include controllers ranging from 1GbE to 100GbE, as well as next-generation Intel Ethernet with better application performance and more features.