What is Deepfake Technology?

In recent years, deepfake technology has emerged as a groundbreaking application of artificial intelligence (AI) that raises both fascination and concern. Deepfakes are synthetic media, typically audio or video, that have been manipulated or entirely generated using machine learning models. This article looks at how deepfake technology generates synthetic media and examines the ethical and societal implications of this rapidly evolving field.

Understanding Deepfake Technology

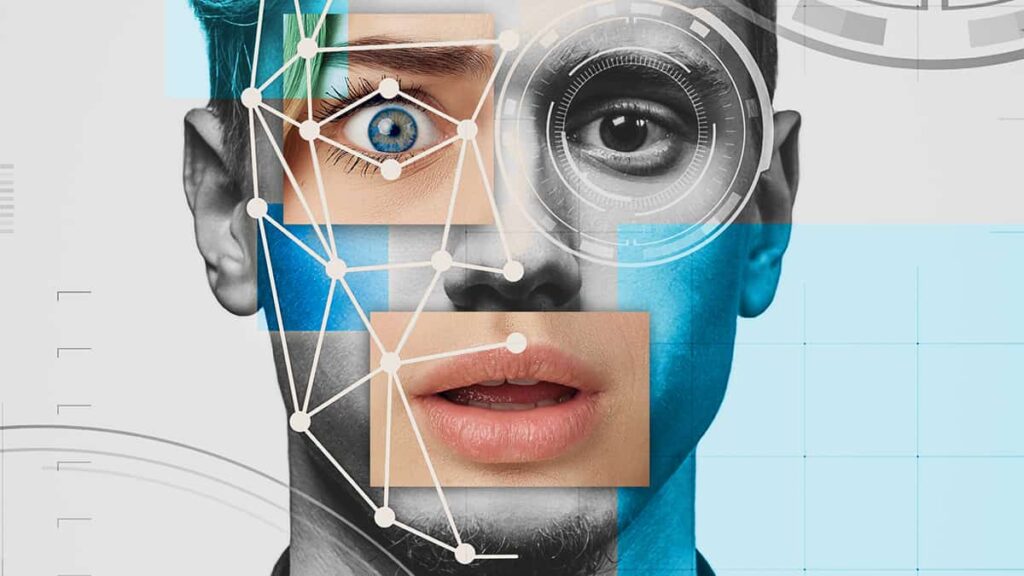

Deepfake technology relies on deep learning models, such as autoencoders and generative adversarial networks (GANs), to create realistic yet fabricated audio and video content. A GAN consists of two neural networks: a generator that produces synthetic media and a discriminator that tries to tell real samples from generated ones. The two are trained against each other: as the discriminator gets better at distinguishing real from fake content, the generator learns to produce increasingly convincing output.
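
To make the adversarial loop concrete, here is a toy GAN in plain Python. It is a minimal sketch under loud assumptions, not a deepfake model: the "real" data are one-dimensional samples from a Gaussian (mean 4 by default, an arbitrary choice), the generator is the affine map `a * z + b` applied to random noise, and the discriminator is logistic regression. The function names and hyperparameters are hypothetical, and the gradients are derived by hand. Even this toy is not guaranteed to converge; GAN training often oscillates.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def softplus(s: float) -> float:
    """log(1 + exp(s)) without overflow; note -log(sigmoid(s)) == softplus(-s)."""
    return max(s, 0.0) + math.log1p(math.exp(-abs(s)))


def train_toy_gan(steps=500, batch=32, lr=0.02, real_mean=4.0, real_std=1.0, seed=0):
    """Hypothetical 1-D GAN: G(z) = a*z + b, D(x) = sigmoid(w*x + c)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.1, 0.0  # discriminator parameters
    history = []  # (discriminator loss, generator loss) per step

    for _ in range(steps):
        real = [rng.gauss(real_mean, real_std) for _ in range(batch)]
        zs = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in zs]

        # Discriminator step: minimise -[log D(real) + log(1 - D(fake))].
        gw = gc = d_loss = 0.0
        for xr, xf in zip(real, fake):
            sr, sf = w * xr + c, w * xf + c
            gw += (sigmoid(sr) - 1.0) * xr + sigmoid(sf) * xf
            gc += (sigmoid(sr) - 1.0) + sigmoid(sf)
            d_loss += softplus(-sr) + softplus(sf)
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step (non-saturating loss): minimise -log D(G(z))
        # against the freshly updated discriminator.
        ga = gb = g_loss = 0.0
        for z in zs:
            s = w * (a * z + b) + c
            grad_x = (sigmoid(s) - 1.0) * w  # dLoss/dG(z) via the chain rule
            ga += grad_x * z
            gb += grad_x
            g_loss += softplus(-s)
        a -= lr * ga / batch
        b -= lr * gb / batch

        history.append((d_loss / batch, g_loss / batch))

    return (a, b), (w, c), history


if __name__ == "__main__":
    (a, b), _, history = train_toy_gan()
    print(f"generator: G(z) = {a:.2f} * z + {b:.2f}")
    print(f"final losses (D, G): {history[-1][0]:.3f}, {history[-1][1]:.3f}")
```

Because a linear discriminator can only tell the two distributions apart by their location, it tends to push the generator's offset `b` toward the real mean (often overshooting and oscillating around it) while giving little reliable signal about the spread. That limitation is one reason practical systems use deep networks for both the generator and the discriminator.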

The process of generating a deepfake involves several steps. First, the model is trained on a dataset of thousands of images or video frames, from which it learns patterns such as facial features, expressions, and voice characteristics. Once trained, the model can generate synthetic media by swapping one person's face onto another's, altering expressions, or even manipulating speech.

Ethical Concerns and Misuse

Deepfake technology has raised significant ethical concerns. It has the potential to be misused for malicious purposes, such as spreading disinformation, defaming individuals, or impersonating public figures. Deepfake videos can convincingly depict someone saying or doing things they never did, leading to reputational damage, privacy invasion, and the erosion of trust in media and information.

The rise of deepfakes poses significant challenges for journalism and media integrity. Convincing fake news videos can undermine the credibility of legitimate news sources, so journalists and media consumers must verify the authenticity of content and rely on trusted sources to curb the spread of misinformation.

Deepfakes also raise serious concerns regarding privacy and consent. Manipulating someone’s likeness without their consent infringes upon their privacy rights and can have severe psychological, social, and legal consequences. Protecting individuals from deepfake abuse requires both robust legislation and technological countermeasures.

Addressing Challenges & Benefits

Addressing the challenges posed by deepfake technology requires a multi-faceted approach. Technological solutions such as detection algorithms, watermarking techniques, and media authentication mechanisms are being developed to identify and mitigate deepfake content. Raising public awareness and building media literacy and critical thinking skills can further reduce the impact of deepfake manipulation.
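
As one illustration of a media authentication mechanism, the sketch below uses an HMAC-SHA256 tag from Python's standard library: a publisher computes a tag over the exact bytes of a file, and anyone holding the same key can later check that the file has not been altered. This is a simplifying assumption: production provenance systems generally use public-key signatures and signed metadata rather than a shared secret, and a tag can only show that a file is unchanged since it was signed, not that its content was genuine to begin with. The function names and the key are hypothetical.

```python
import hashlib
import hmac


def sign_media(media: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over these exact bytes (hypothetical helper)."""
    return hmac.new(key, media, hashlib.sha256).hexdigest()


def verify_media(media: bytes, key: bytes, tag: str) -> bool:
    """True only if `media` is byte-for-byte what was signed with `key`."""
    expected = sign_media(media, key)
    # compare_digest avoids leaking, via timing, where the two tags differ.
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = b"publisher-secret-key"  # hypothetical shared secret
    original = b"stand-in for encoded video frame bytes"
    tag = sign_media(original, key)
    tampered = original.replace(b"frame", b"FRAME")  # simulate an edit
    print(verify_media(original, key, tag))  # True
    print(verify_media(tampered, key, tag))  # False
```

Any change to the file, even a single byte from re-encoding, invalidates the tag, which is why such schemes are usually paired with signed metadata describing legitimate edits.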

Despite the ethical concerns, deepfake technology also has beneficial applications. In the entertainment, filmmaking, and gaming industries it can be used to create realistic special effects and digital avatars, and it could transform virtual reality experiences, improve dubbing and localization, and open up new creative possibilities.

As the field continues to evolve, regulatory frameworks become imperative. Governments and policymakers need to work with technology experts to establish legal guidelines that protect individuals’ rights, preserve media integrity, and foster responsible use of synthetic media technology.

The Takeaway

Deepfake technology presents a double-edged sword, offering exciting possibilities while raising serious ethical and societal concerns. As we navigate this evolving landscape, it is crucial to strike a balance between innovation and safeguarding against misuse. By addressing the ethical implications, promoting media literacy, and fostering collaboration among technology developers, policymakers, and society at large, we can harness the potential of deepfake technology while protecting the integrity of our digital world.