Google Announces Beta Version of Deep Learning Containers For ML Applications

Artificial Intelligence and Machine Learning are among the futuristic technologies every tech enthusiast dreams about. Tech giants like Google and Microsoft are leaving no stone unturned in providing the resources required to get started with these technologies.

A few days ago, Google announced the availability of the beta version of Deep Learning Containers. It’s a new cloud service that aims to provide an environment for the development, testing, and deployment of machine learning applications.

The major highlight of Deep Learning Containers is the ability to test machine learning applications within the environment and then move them quickly to the cloud.

In case you didn’t know, Amazon has also launched a similar service named AWS Deep Learning Containers. It provides Docker images for easy deployment of custom machine learning (ML) environments. Now, let’s have a look at some major features of Google’s Deep Learning Containers.

Support for PyTorch, TensorFlow, scikit-learn and R

Deep Learning Containers support machine learning frameworks like PyTorch, TensorFlow 1.13 and TensorFlow 2.0. Unlike AWS Deep Learning Containers, they don’t support the Apache MXNet framework, but they come with PyTorch, TensorFlow, scikit-learn and R pre-installed. Google’s Deep Learning Containers can run both in the cloud and on-premises.
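To see which of these frameworks a given environment actually provides, a small check like the one below can be run inside the container or on a local machine. It is only a minimal sketch: the `FRAMEWORKS` mapping and the `installed_frameworks` helper are hypothetical names chosen for illustration, and the distribution names are assumptions that may differ between images (for example, a GPU build of TensorFlow may be published under another name, in which case the version shows as "unknown").

```python
from importlib import metadata, util

# Import name -> distribution name. These pairs are assumptions for
# illustration; adjust them to match the image you are inspecting.
FRAMEWORKS = {
    "torch": "torch",
    "tensorflow": "tensorflow",
    "sklearn": "scikit-learn",
}


def installed_frameworks(frameworks=FRAMEWORKS):
    """Return {import_name: version, "unknown", or None if not importable}."""
    report = {}
    for module_name, dist_name in frameworks.items():
        # find_spec locates the module without importing it.
        if util.find_spec(module_name) is None:
            report[module_name] = None
            continue
        try:
            report[module_name] = metadata.version(dist_name)
        except metadata.PackageNotFoundError:
            # Importable, but installed under a different distribution name.
            report[module_name] = "unknown"
    return report


if __name__ == "__main__":
    for name, version in installed_frameworks().items():
        print(f"{name}: {version or 'not installed'}")
```

Running the same script locally and in the container gives a quick way to compare the two environments.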

Docker images now work on cloud and on-premises

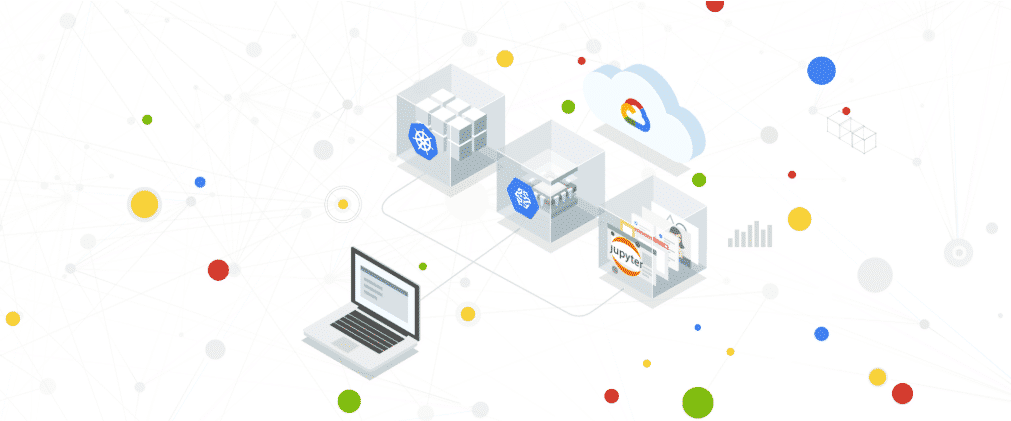

The Docker images now work in the cloud, on-premises, and across GCP products and services such as Google Kubernetes Engine (GKE), Compute Engine, AI Platform, and Cloud Run, as well as on Kubernetes and Docker Swarm.
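As a rough illustration of running one of these images locally, the sketch below assembles a `docker run` command in Python without executing it. The helper `docker_run_command` is hypothetical, and the image path, the port 8080, and the `/home` mount point are assumptions that should be checked against Google Cloud’s documentation before use.

```python
import shlex


def docker_run_command(image, local_dir, host_port=8080, container_port=8080):
    """Build (but do not run) a docker command for a local container.

    -d runs the container in the background, -p publishes a port, and
    -v mounts a local directory; /home as the mount target is an assumption.
    """
    return [
        "docker", "run", "-d",
        "-p", f"{host_port}:{container_port}",
        "-v", f"{local_dir}:/home",
        image,
    ]


if __name__ == "__main__":
    # Assumed image path; verify the current name in the official docs.
    image = "gcr.io/deeplearning-platform-release/tf-cpu.1-13"
    print(shlex.join(docker_run_command(image, "/path/to/local/dir")))
```

Building the command as a list keeps each argument separate, so it can be passed to `subprocess.run` later without shell quoting problems.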

Useful Tools and Packages

GCP Deep Learning Containers consist of several performance-optimized Docker containers that bring various tools for running deep learning algorithms. These tools include preconfigured Jupyter Notebooks and Google Kubernetes Engine. While the former offers an interactive way to work with and share code, visualizations, equations and text, the latter is used for deploying multiple containers. Google’s Deep Learning Containers also come with access to packages and tools such as Nvidia’s CUDA, cuDNN, and NCCL.

“If your development strategy involves a combination of local prototyping and multiple cloud tools, it can often be frustrating to ensure that all the necessary dependencies are packaged correctly and available to every runtime,” said Mike Cheng, a software engineer at Google Cloud.

If you are just getting started, make sure to visit the official website of Google Cloud for all the resources and documentation.

“Deep Learning Containers will provide a consistent environment for testing and deploying your application across GCP products and services, like Cloud AI Platform Notebooks and Google Kubernetes Engine (GKE),” Cheng added.

So, what do you think about Google’s Deep Learning Containers? Tell me in the comments below.